Data Profiling with Spark and YData

Ex corporate consultant of 12 years turned Soloprenuer. Having a blast every day and sharing little bits of info along the way.

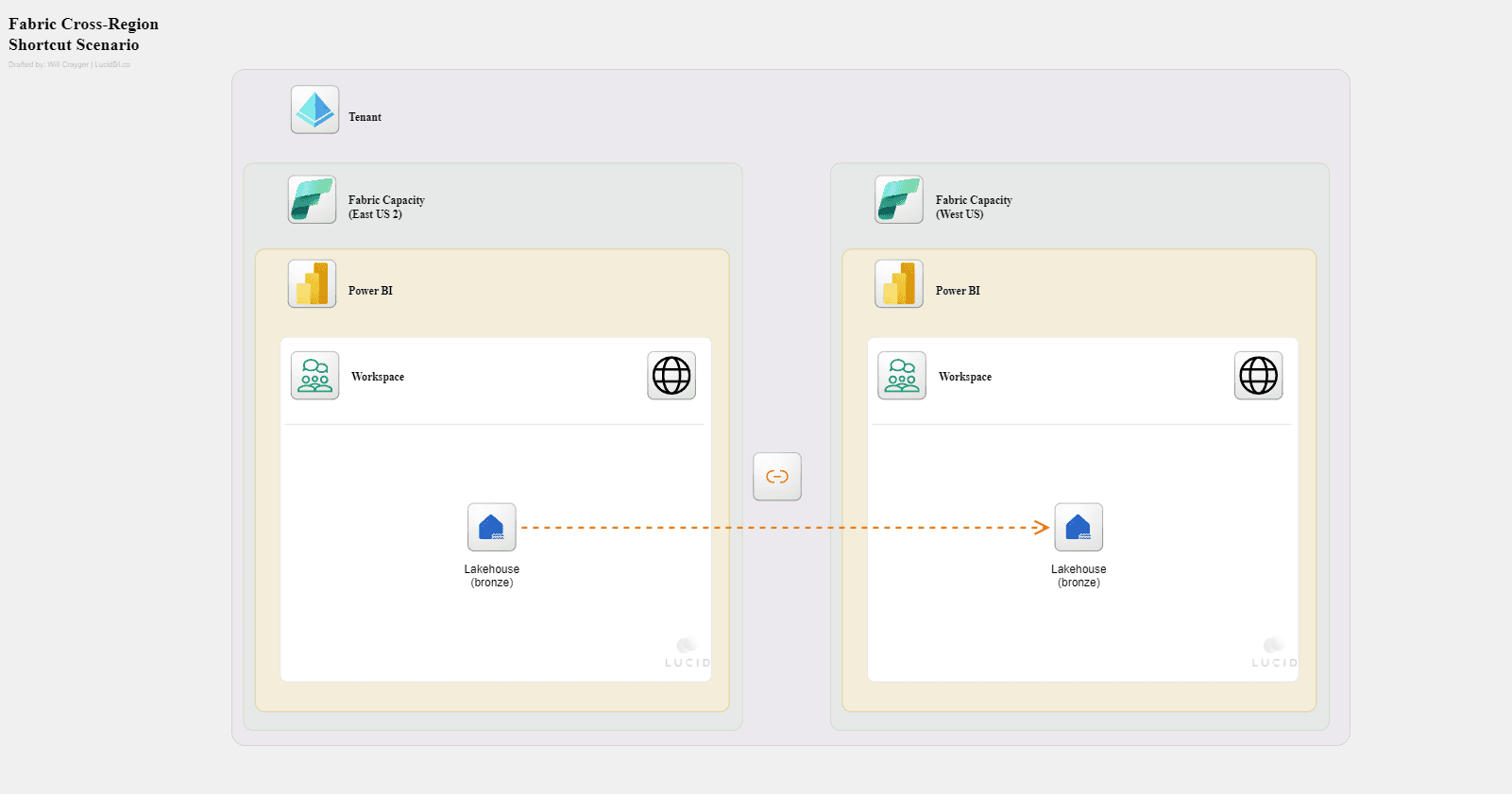

Specialized in data and analytics with a focus on the Microsoft tech stack (Azure, Fabric, Power BI

Data analytics often begins with profiling your data. Data profiling is simply the act of examining the raw source data to understand things like the structure and quality of the data.

As an engineer, understanding things such as the distribution of value, min/max, and unique occurances of values in the data you’re working with is very powerful. It helps you to better understand how to work with your data when considering joining tables, configuring incremental extract/load processes, and identifying the natural key of the table for dimensional modeling.

Data profiling can often be a long, tedious process. Thankfully, there are tools available to help expedite the process.

the Ydata library is one such tool and should be in everyones toolbelt.

The library itself is very robust; however, it can also be simplified to get a quick profile of your data. In addition, you have several options of what to do with the output once it’s generated such as rendering the html directly in your notebook or writing the output as JSON to a storage location.

With only a few lines of code you gain significant visibility into your data. For example, if we wanted to understand the makeup of our customers table:

Variables represent the columns of the table and can be explored in more detail. We can start to probe into the columns (variables) to find things like the uniqueness of that column:

From there we can probe even further to view the statistical breakdown of the column:

Or maybe we wanted to see the distribution of values:

Beyond these simple examples there are advanced settings allowing you to customize your exploration through configuration files and sample configurations available through the public github:

Below is a snippet to get you started.

%pip install ydata-profiling --q

from pyspark.sql.functions import col, when, lit

from datetime import datetime, timezone

from pyspark.sql.types import DecimalType, DateType, TimestampType, IntegerType, DoubleType, StringType

from ydata_profiling import ProfileReport

def profile_spark_dataframe(

df,

table_name

):

"""

Profiles a Spark DataFrame by handling null values, transforming the DataFrame, and generating a profiling report.

This function first processes the DataFrame by setting default values for null entries based on data type:

- Decimal fields are set to 0.0 if null.

- Date and Timestamp fields are set to January 1, 1900, if null.

The transformed Spark DataFrame is then converted to a Pandas DataFrame and passed to `ydata_profiling.ProfileReport`

to create a profiling report. The report can be returned either as an HTML string or as a `ProfileReport` object.

:param df: The Spark DataFrame to be profiled.

:return: Returns the report as a `ProfileReport` object.

"""

# Handle nulls by setting defaults before profiling

for field in df.schema.fields:

if isinstance(field.dataType, DecimalType):

df = df.withColumn(field.name, when(col(field.name).isNull(), lit(0.0)).otherwise(col(field.name).cast(DoubleType())))

elif isinstance(field.dataType, DateType) or isinstance(field.dataType, TimestampType):

df = df.withColumn(field.name, when(col(field.name).isNull(), lit(datetime(1900, 1, 1, 0, 0, 0))).otherwise(col(field.name)))

# Convert to Pandas dataframe

df = df.toPandas()

# Generate report

report = ProfileReport(

df,

title=f'Profiling for {table_name}',

infer_dtypes=False,

correlations=None,

minimal=True

)

return report

# Set variables

schema_name = 'stage'

table_name = 'customers'

# Build sample DataFrame

df = spark.table(f'{schema_name}.{table_name}').limit(10000)

# Generate report

report = profile_spark_dataframe(df, table_name)

# Convert report to HTML

report = report.to_html()

# View report

displayHTML(report)

# # Create a timestamped file name

utc_timestamp = datetime.now(timezone.utc).strftime("%Y%m%d_%H%M%S")

file_name = f'{table_name}_profile_{utc_timestamp}.html'

# # Set output path to save html file

output_path = f"abfss://xxxxxx@onelake.dfs.fabric.microsoft.com/xxxxxx/Files/data_profile/{file_name}"

# # Write file to lakehouse

mssparkutils.fs.put(

output_path,

report

)

If you'd like to learn more about how Lucid can support your team, let's connect on LinkedIn and schedule an intro call.